Projects

Explore my portfolio of projects across different technologies.

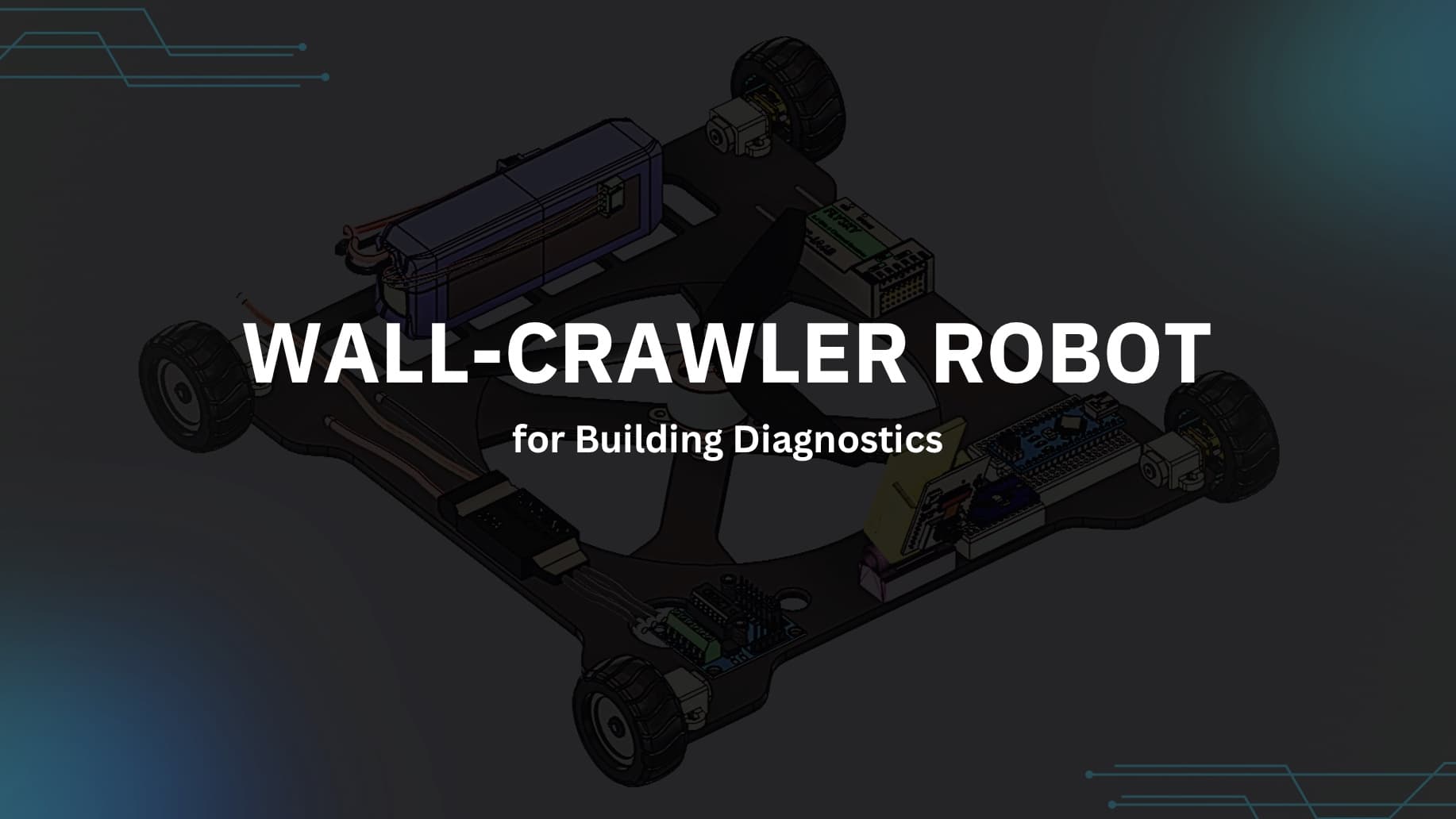

A Crack-Detection Wall-Climbing Robot

Mechatronics capstone — an autonomous robot that climbs vertical building surfaces using propeller-based adhesion, streams live images to a Django server, and classifies structural cracks in real time using a TensorFlow model.

    ┌─────────────┐   I2C    ┌─────────────────┐
    │ MPU9250     │─────────►│                 │
    │ 9-DOF IMU   │          │  Arduino Nano   │
    ├─────────────┤   I2C    │                 │
    │ BMP280      │─────────►│                 │
    │ Altimeter   │          └────────┬────────┘
    └─────────────┘                   │
                                      │  UART "IMU_roll_pitch_yaw_elev"
                                      ▼
                             ┌──────────────────┐
                             │    ESP32-CAM     │
                             │  WiFi + Camera   │
                             └────────┬─────────┘
                                      │
                                      │  HTTP POST /upload/
                                      │  filename: IMU_-2.3_88.1_0.0_124.jpg
                                      ▼
                         ┌────────────────────────┐
                         │     Django Server      │
                         │                        │
                         │    captured_images/    │
                         │            │           │
                         │  AI Thread (5s poll)   │
                         │    TensorFlow Keras    │
                         │      120×120 RGB       │
                         │            │           │
                         │     ┌──────┴──────┐    │
                         │     │             │    │
                         │crack_images/ no_crack/ │
                         │                        │
                         │   Bootstrap Gallery    │
                         │   (auto-refresh 2s)    │
                         └────────────────────────┘

The Arduino Nano reads an MPU9250 (roll, pitch, yaw) and a BMP280 (relative altitude). Orientation uses a complementary filter — gyroscope integration corrected by the accelerometer on every tick.

    // Complementary filter: blends gyro integration with accel-derived angle
    const float alpha = 0.96f;
    roll  = alpha * lastRoll  + (1.0f - alpha) * accelRoll;
    pitch = alpha * lastPitch + (1.0f - alpha) * accelPitch;
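The excerpt above shows only the blending step. A minimal Python sketch of one full tick, including the gyro integration the text describes, might look like this. The function names, the 100 Hz tick rate, and the axis convention in accel_roll_pitch are assumptions for illustration, not the firmware's actual code.

```python
import math


def complementary_filter(prev_angle, gyro_rate, accel_angle, dt, alpha=0.96):
    """Blend the gyro-integrated angle with the accelerometer-derived angle.

    prev_angle and accel_angle are in degrees; gyro_rate is in degrees/second.
    """
    gyro_angle = prev_angle + gyro_rate * dt  # short term: integrate the gyro
    # long term: pull the estimate toward the accelerometer angle
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle


def accel_roll_pitch(ax, ay, az):
    """Roll and pitch (degrees) from a gravity vector (one common convention)."""
    roll = math.degrees(math.atan2(ay, az))
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    return roll, pitch


# Simulated run: the accelerometer reads a steady 10 degrees of roll while the
# gyro reports a constant 0.5 deg/s bias (assumed values).
roll = 0.0
for _ in range(500):  # 500 ticks at an assumed 100 Hz = 5 s
    roll = complementary_filter(roll, gyro_rate=0.5, accel_angle=10.0, dt=0.01)
```

With these numbers the estimate settles near 10.12° rather than drifting, because a constant gyro bias contributes only alpha · rate · dt / (1 − alpha) = 0.12° at steady state.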

Every 2 seconds, the Arduino formats all four values into a single UART string and sends it to the ESP32-CAM:

    IMU_<roll>_<pitch>_<yaw>_<elevation>

Example: IMU_-2.3_88.1_0.0_124
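For testing the server side without hardware, the same string can be produced in Python. This is a sketch, assuming one decimal place for the angles and a whole-number elevation in centimetres, which is what the example suggests; the function name is hypothetical.

```python
def format_imu_message(roll, pitch, yaw, elevation_cm):
    """Build the IMU_<roll>_<pitch>_<yaw>_<elevation> string."""
    # Assumption: angles carry one decimal, elevation is rounded to an integer.
    return f"IMU_{roll:.1f}_{pitch:.1f}_{yaw:.1f}_{round(elevation_cm)}"


message = format_imu_message(-2.3, 88.1, 0.0, 124)
print(message)  # IMU_-2.3_88.1_0.0_124
```

The underscore works as a delimiter because fixed-point numbers never contain one, and a leading minus sign survives the later split untouched.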

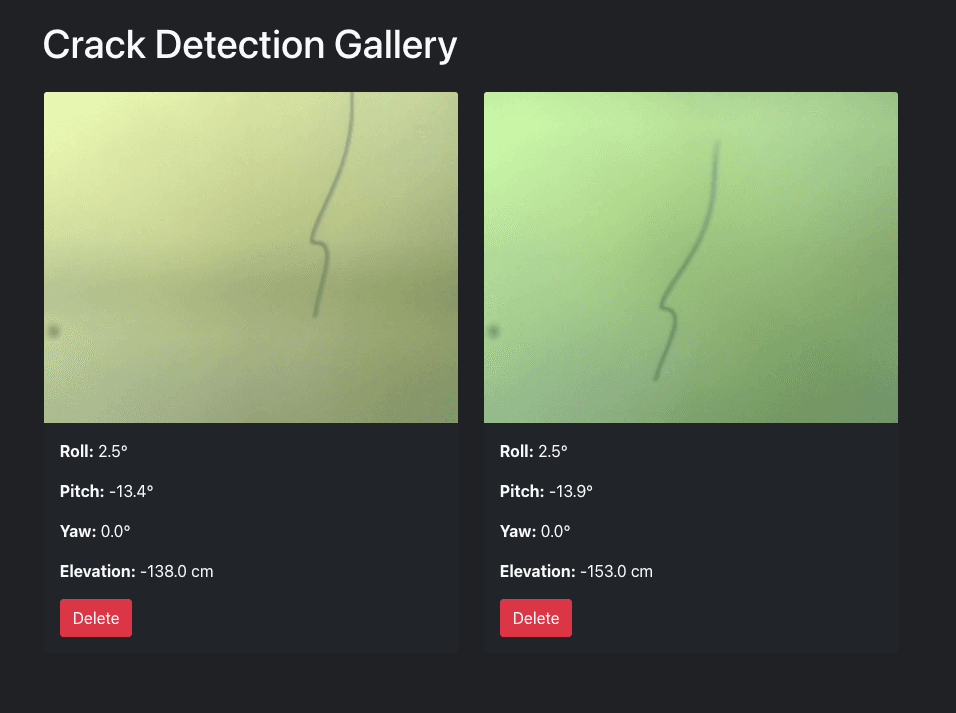

Rather than making a separate API call for metadata, the sensor string becomes the JPEG filename. The ESP32-CAM captures a frame and POSTs it with that name:

    POST /upload/ HTTP/1.1
    Content-Type: multipart/form-data; boundary=dataMarker

    --dataMarker
    Content-Disposition: form-data; name="imageFile"; filename="IMU_-2.3_88.1_0.0_124.jpg"
    Content-Type: image/jpeg

    [JPEG binary, streamed in 1024-byte chunks]
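To exercise the upload endpoint from a laptop, the request body can be assembled by hand in Python. The sketch below mirrors the request shown above; the helper names are hypothetical and the placeholder bytes are not a real image. It also appends the closing delimiter (the boundary followed by two hyphens) that the multipart format requires at the end of the body, which the excerpt does not show.

```python
def build_multipart_body(filename, jpeg_bytes, boundary="dataMarker"):
    """Assemble a multipart/form-data body with one file part named imageFile."""
    head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="imageFile"; filename="{filename}"\r\n'
        "Content-Type: image/jpeg\r\n"
        "\r\n"
    ).encode("ascii")
    tail = f"\r\n--{boundary}--\r\n".encode("ascii")  # closing delimiter
    return head + jpeg_bytes + tail


def build_headers(body, boundary="dataMarker"):
    """Headers that must agree with the body built above."""
    return {
        "Content-Type": f"multipart/form-data; boundary={boundary}",
        "Content-Length": str(len(body)),
    }


fake_jpeg = b"\xff\xd8" + b"\x00" * 16 + b"\xff\xd9"  # placeholder, not a real image
body = build_multipart_body("IMU_-2.3_88.1_0.0_124.jpg", fake_jpeg)
headers = build_headers(body)
```

The boundary in the Content-Type header has to match the one used inside the body exactly, otherwise the server cannot split the parts.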

Django's gallery view recovers the sensor values with a single split — no database joins needed:

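    def parse_image_data(filename):
        """Recover the four sensor readings encoded in an upload's filename."""
        # "IMU_-2.3_88.1_0.0_124.jpg" -> ["-2.3", "88.1", "0.0", "124"]
        data = filename.replace('IMU_', '').replace('.jpg', '').split('_')
        return {
            'roll': float(data[0]),
            'pitch': float(data[1]),
            'yaw': float(data[2]),
            'elevation': float(data[3]),
        }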
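The split above trusts every filename to follow the scheme. A more defensive variant, sketched here with a hypothetical name and a regular expression of my own choosing, returns None for anything that does not match. That matters if, for instance, files are saved through Django's default file storage, which appends a random suffix to a name that already exists.

```python
import re

# Four underscore-separated numbers between the IMU_ prefix and .jpg suffix.
_NUM = r"(-?\d+(?:\.\d+)?)"
FILENAME_RE = re.compile(rf"^IMU_{_NUM}_{_NUM}_{_NUM}_{_NUM}\.jpg$")


def parse_image_data_safe(filename):
    """Return the sensor dict, or None when the name does not match the scheme."""
    match = FILENAME_RE.match(filename)
    if match is None:
        return None
    roll, pitch, yaw, elevation = (float(group) for group in match.groups())
    return {"roll": roll, "pitch": pitch, "yaw": yaw, "elevation": elevation}


print(parse_image_data_safe("IMU_-2.3_88.1_0.0_124.jpg"))
print(parse_image_data_safe("IMU_-2.3_88.1_0.0_124_a1B2c3D.jpg"))  # None
```

A gallery view could then skip files that return None instead of raising on a stray upload.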

A TensorFlow inference thread starts automatically when Django boots — no manual management needed:

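    # apps.py: fires once on startup
    import os
    import threading

    from django.apps import AppConfig


    class CameraAppConfig(AppConfig):
        def ready(self):
            # Assumption: ai_monitoring is a module inside this app's package.
            from . import ai_monitoring

            # Under runserver's autoreloader, ready() runs in both the watcher
            # process and the serving child (RUN_MAIN='true'); skipping the
            # child keeps it to a single monitoring thread.
            if os.environ.get('RUN_MAIN', None) != 'true':
                thread = threading.Thread(target=ai_monitoring.start_monitoring)
                thread.daemon = True  # do not block interpreter shutdown
                thread.start()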

The monitoring loop runs every 5 seconds:

    captured_images/*.jpg
            │
            ▼  ImageDataGenerator(rescale=1./255)
            │  flow_from_dataframe(target_size=(120,120), color_mode='rgb')
            │
            ▼  model.predict()
            │
            ├── score > 0.5 ──► crack_images/
            └── score ≤ 0.5 ──► no_crack_images/
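The routing step at the bottom of that flow can be sketched independently of TensorFlow. In the version below, score_fn is a hypothetical stand-in for the preprocessing and model.predict() call, and the function name and return value are assumptions for illustration.

```python
import shutil
from pathlib import Path


def route_images(captured_dir, crack_dir, no_crack_dir, score_fn, threshold=0.5):
    """Move each captured JPEG into crack_dir or no_crack_dir based on its score."""
    crack_dir, no_crack_dir = Path(crack_dir), Path(no_crack_dir)
    crack_dir.mkdir(parents=True, exist_ok=True)
    no_crack_dir.mkdir(parents=True, exist_ok=True)

    moved = {}
    # sorted() materialises the listing before any file is moved out of it.
    for image_path in sorted(Path(captured_dir).glob("*.jpg")):
        score = score_fn(image_path)  # stand-in for the model's crack probability
        target = crack_dir if score > threshold else no_crack_dir
        shutil.move(str(image_path), str(target / image_path.name))
        moved[image_path.name] = target.name
    return moved
```

Because files are moved rather than copied, captured_images/ is empty after each pass and the next 5-second poll only sees new uploads. A score of exactly 0.5 lands in no_crack_images/, matching the ≤ branch in the flow.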

The frontend auto-refreshes without WebSockets: jQuery polls /count-photos/ every 2 seconds and re-fetches the HTML fragment only when the count changes. Each card renders all four sensor fields parsed from the filename.
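The server side of that polling scheme can be very small. The sketch below shows one way the count and the refresh rule might be computed; both function names are hypothetical, and in the real project the count would be returned from a Django view (for example wrapped in a JsonResponse) while the comparison runs in the browser.

```python
from pathlib import Path


def count_photos(*directories):
    """Total number of .jpg files across the given directories."""
    return sum(
        1
        for directory in directories
        for path in Path(directory).glob("*.jpg")
        if path.is_file()
    )


def needs_refresh(last_seen_count, current_count):
    """Client-side rule: re-fetch the gallery only when the count has changed."""
    return current_count != last_seen_count
```

Polling a single integer every 2 seconds is cheap, and the heavier HTML fragment is only transferred when a new image has actually been classified.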

Before finalizing hardware gains, I simulated the wall-angle transition (0° horizontal → 90° vertical) in Python. The controller output scales motor thrust based on angle error.

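    import numpy as np

    class WallCrawlerController:
        # Robot: 650 g, friction μ = 0.6, max thrust = 13 N
        def __init__(self, mass=0.65, friction_coef=0.6, g=9.81):
            # Constructor is assumed; defaults come from the comment above.
            self.mass = mass                    # kg
            self.friction_coef = friction_coef
            self.g = g                          # m/s², standard gravity assumed

        def calculate_required_thrust(self, angle_deg):
            angle = np.radians(angle_deg)
            mg = self.mass * self.g
            # Along-wall weight component, scaled by the friction term and a 1.2 multiplier
            return mg * np.sin(angle) * np.sqrt(1 + self.friction_coef**2) * 1.2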
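As a quick sanity check on that formula, the sweep below evaluates it at a few wall angles using the robot parameters from the comment. It is a standalone sketch that uses the math module instead of NumPy; g = 9.81 m/s² and the constant names are assumptions.

```python
import math

MASS_KG = 0.65         # 650 g, from the class comment
FRICTION_COEF = 0.6    # μ, from the class comment
MAX_THRUST_N = 13.0    # from the class comment
G = 9.81               # m/s², assumed standard gravity


def required_thrust(angle_deg):
    """Same expression as calculate_required_thrust, written as a plain function."""
    angle = math.radians(angle_deg)
    weight = MASS_KG * G
    return weight * math.sin(angle) * math.sqrt(1 + FRICTION_COEF**2) * 1.2


for angle_deg in (0, 30, 60, 90):
    thrust = required_thrust(angle_deg)
    headroom = MAX_THRUST_N - thrust
    print(f"{angle_deg:>2} deg: required {thrust:5.2f} N, headroom {headroom:5.2f} N")
```

At 90° the expression asks for about 8.92 N, which leaves roughly 4 N of headroom under the 13 N limit.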

Simulation results — four gain combinations:

| Kp | Ki | Kd | Steady-State Error |
|---|---|---|---|
| 1.8 | 0.12 | 0.35 | 4.43° |
| 2.0 | 0.10 | 0.40 | 4.23° |
| 1.5 | 0.08 | 0.30 | 4.48° |
| 1.2 | 0.05 | 0.25 | 4.35° |
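For readers unfamiliar with how the three gains enter the control law, here is a textbook discrete PID update in Python. It is a generic sketch, not the simulation code behind the table, and it will not reproduce those steady-state errors because the plant model is not shown here. The class name and the 10 ms time step are assumptions.

```python
class PID:
    """Textbook discrete PID: output = Kp*e + Ki*sum(e*dt) + Kd*de/dt."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the first sample

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Gains from the second table row; target is the 90-degree vertical wall.
pid = PID(kp=2.0, ki=0.10, kd=0.40)
first = pid.update(setpoint=90.0, measurement=0.0, dt=0.01)
second = pid.update(setpoint=90.0, measurement=1.0, dt=0.01)
```

On the second sample the derivative term is negative because the error is shrinking, which is what damps the response as the robot approaches the target angle.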

| Part | Role |
|---|---|
| Arduino Nano | Sensor reading + UART trigger |
| MPU9250 | Roll / pitch / yaw (9-DOF IMU) |
| BMP280 | Relative altitude in cm |
| ESP32-CAM | JPEG capture + WiFi HTTP POST |
| Propeller motors | Negative-pressure wall adhesion |
| Drive motors | Directional movement on surface |